The National Institute of Standards and Technology (NIST) has unveiled new cybersecurity controls designed specifically for artificial intelligence (AI) systems, a significant step in securing and governing AI technology worldwide. As governments and experts grapple with AI’s complexities, there is a critical need for strong frameworks that manage risk while fostering innovation. The NIST AI cyber controls are meant to address that need.

Here are key takeaways from NIST’s latest initiative:

- NIST released new cybersecurity controls for AI systems that address vulnerabilities unique to AI.

- The move reflects growing concern among government bodies and experts about AI governance and deployment.

- AI implementation in government faces challenges, including procurement hurdles for critical resources.

- Leading academics are contributing to AI technology governance and highlighting the urgency of comprehensive frameworks.

- The new NIST AI cyber controls aim to bridge the gap between AI’s potential and the need for secure and ethical adoption.

As artificial intelligence rapidly evolves from theory to practical applications, robust security measures have become paramount. NIST’s recent unveiling of AI-specific cyber controls is a pivotal development: the controls target vulnerabilities and risks that traditional cybersecurity paradigms may not fully cover, such as those arising from machine learning, data dependencies, and autonomous decision-making. They form part of the broader NIST AI cyber controls initiative.

NIST’s announcement signals a shift in focus, from excitement about AI to a pragmatic assessment of it. The agency’s work gives both the public and private sectors a framework for adopting AI responsibly and navigating the challenges involved. The initiative arrives with a palpable sense of urgency, especially within government operations, where AI’s impact is a pressing concern.

Addressing Government AI Risks with NIST AI Cyber Controls

Deploying AI within government brings unique challenges and potential pitfalls. The question “Government AI: What could go wrong?” captures the concern, which extends beyond technical glitches to policy, ethics, and operational resilience. For example, officials found an “unprecedented workaround” to invigorate domestic rare earth mining. While seemingly tangential, the episode shows how governments are working to secure the foundational components that AI depends on. It highlights complex dependencies, as well as the potential for regulatory shortcuts taken in pursuit of a technological advantage.

Such workarounds might speed up resource acquisition, yet they raise questions about transparency, long-term sustainability, and the broader implications for international trade and environmental policy. For AI, the security of hardware and data infrastructure is as vital as that of the software. The push for domestic rare earth sourcing is a strategic move to secure supply chains for advanced computing, including AI. It reduces geopolitical risk but adds new procurement complexities.

The stakes are very high, particularly where AI is integrated into critical infrastructure, including defense systems and public services. Here, robust NIST AI cyber controls are indispensable for mitigating risks that range from data breaches and algorithmic bias to system failures and malicious manipulation. Without clear guidelines, rapid AI adoption could backfire by introducing new cyberattack vectors or leading to unintended societal consequences.

The Academic and Expert Perspective on AI Governance

Discussions about AI governance are not confined to government agencies; they are also a major focus for leading academic institutions and experts. A prominent voice is Harvard’s Jonathan Zittrain, a specialist in internet law and governance whose work explores technology, law, and society. He is speaking on AI technology governance, and his participation highlights the critical role of interdisciplinary thought in shaping AI’s future.

“The advances and potential use cases for adopting artificial intelligence (AI) are truly transformative,” a NIST spokesperson might elaborate. “But with great power comes great responsibility. Our new controls are a testament to our commitment. They ensure AI is adopted securely and ethically. They protect both data and democratic values.”

Figures like Zittrain show that AI governance is not just a technical matter but a complex societal challenge that needs input from legal scholars, ethicists, sociologists, and policymakers. Debates often center on accountability for AI decisions, preventing algorithmic discrimination, ensuring transparency in AI models, and establishing human oversight mechanisms. Comprehensive governance frameworks, supported by technical controls, aim to address these issues.

Academic discourse provides a crucial sounding board for new policies, offering critical analysis and proposing innovative solutions. It also informs public understanding and engagement, which is vital for building trust in AI technologies. The theme “Past Informs Present” from the Chautauqua Lecture Series, where Zittrain is speaking, is apt: lessons from the internet’s early development about privacy, security, and misinformation are now being applied to nascent AI regulation.

Integrating Security and Innovation with NIST AI Cyber Controls

Balancing innovation with strict security and ethical controls is delicate. AI’s advances and potential uses are vast, and they promise to revolutionize sectors including healthcare, transportation, finance, and research. Unlocking this potential responsibly, however, requires a strong foundation built on trust and security.

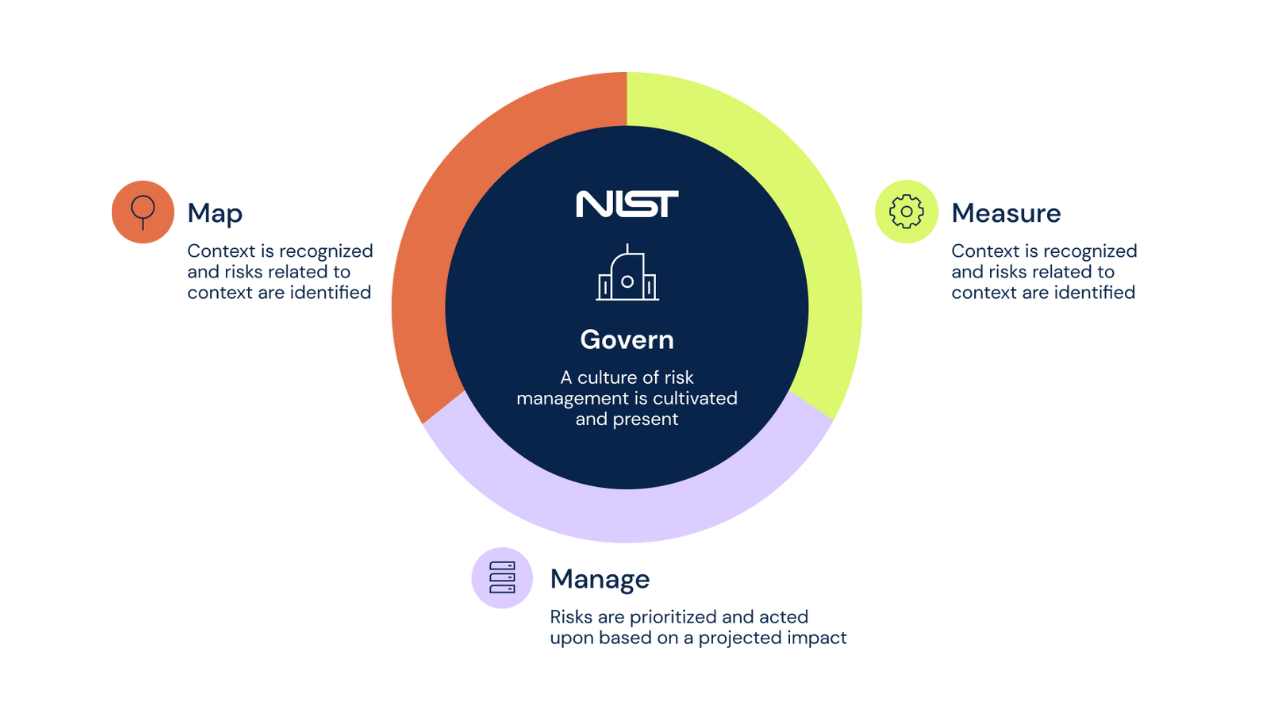

The NIST AI cyber controls provide this foundation. They offer organizations a systematic approach to assessing, managing, and mitigating AI-specific cybersecurity risks across the entire AI lifecycle, from design and development through deployment and decommissioning. This proactive stance is essential for preventing costly breaches, safeguarding sensitive information, and maintaining public confidence in AI systems. Clear guidelines from NIST also reduce the uncertainty that might otherwise hinder AI adoption, especially in risk-averse environments.

The controls are also dynamic: they will evolve as AI technology progresses and new threats emerge. NIST’s collaborative work draws on input from industry, academia, and government, which helps keep the frameworks relevant and effective. Continuous adaptation is vital in AI, where breakthroughs can rapidly change the risk landscape.

The unveiling of the NIST AI cyber controls is a milestone in the global effort to manage artificial intelligence. It responds directly to AI’s multifaceted challenges, which span technical vulnerabilities, governance complexities, and societal implications. As experts like Jonathan Zittrain highlight the need for thoughtful governance and governments navigate AI deployment hurdles, NIST’s framework offers a crucial blueprint for an AI future that is innovative, secure, and ethical. Ongoing dialogue and collaboration will be instrumental in shaping an AI landscape that benefits humanity while mitigating inherent risks.

Frequently Asked Questions

What are the new NIST AI cyber controls?

The National Institute of Standards and Technology (NIST) has released cybersecurity controls tailored for artificial intelligence (AI) systems. They aim to address vulnerabilities and risks unique to AI, including those arising from machine learning and data dependencies.

Why are these NIST AI cyber controls important now?

AI is rapidly moving into practical applications, which creates an urgent need for robust security frameworks at a time when governments and experts are concerned about AI governance. The new controls provide guidance that helps manage risks while promoting responsible innovation.

How do these controls impact AI adoption in various sectors?

The NIST AI cyber controls offer a systematic approach that organizations can use to assess and mitigate AI-specific cybersecurity risks throughout the entire AI lifecycle, from design and development through deployment and decommissioning. By helping prevent breaches and build confidence, they support secure and ethical AI adoption across industries.